LZMA2, released in 2009, is a refinement of LZMA. Depending on the exact implementation, other compression steps may also be performed. The output from this compression is then processed using arithmetic coding for further compression.

It uses a chain compression method that applies the modified LZ77 algorithm at a bit rather than byte level. Lempel-Ziv Markov chain Algorithm (LZMA), released in 1998, is a modification of LZ77 designed for the 7-Zip archiver with a. It is an entropy encoding method that assigns codes based on the frequency of a character.

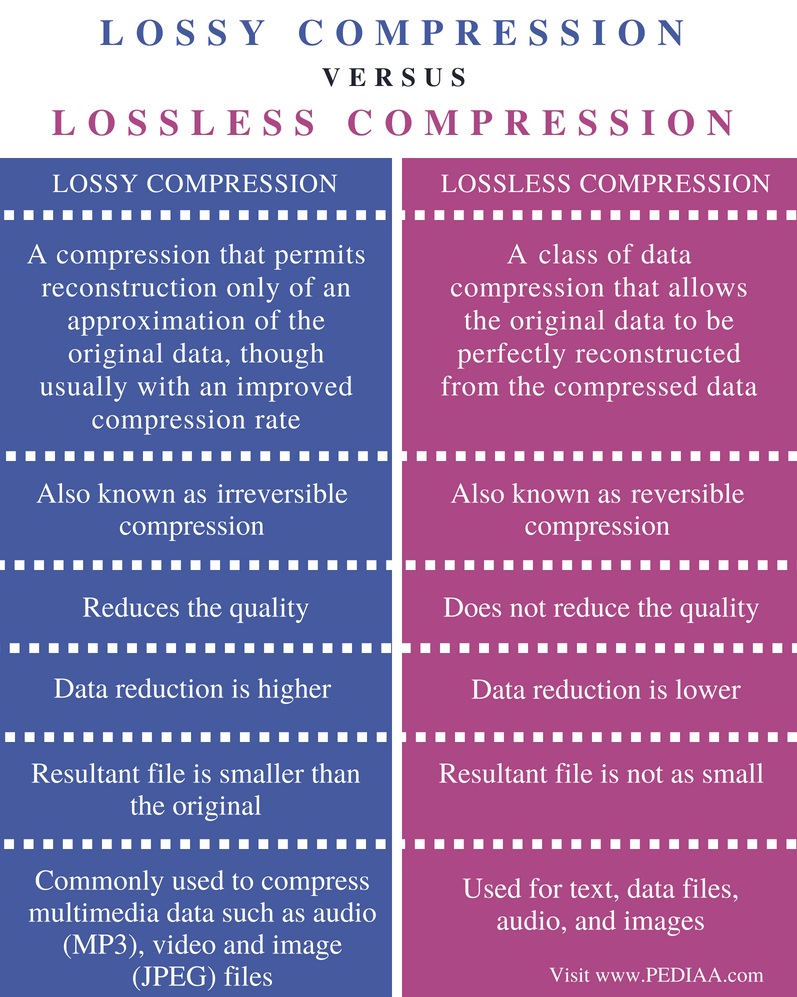

Huffman coding is an algorithm developed in 1952. DEFLATEĭEFLATE, released in 1993 by Phil Katz, combines LZ77 or LZSS preprocessor with Huffman coding. This method is commonly used for archive formats, such as RAR, or for compression of network data. LZSS also eliminates the use of deviating characters, only using offset-length pairs. If it doesn’t, the input is left in the original form. It does this by including a method that detects whether a substitution decreases file size. Lempel-Ziv-Storer-Szymanski (LZSS), released in 1982, is an algorithm that improves on LZ77. When used non-linearly, it requires a significant amount of memory, making LZ77 a better option. It is designed as a linear alternative to LZ77 but can be used for any offset within a file. LZR, released in 1981 by Michael Rodeh, modifies LZ77. In this example, the substitution is slightly larger than the input but with a realistic input (which is much longer) the substitution is typically considerably smaller. You can see a breakdown of this process below. For example, a file containing the the string "abbadabba" is compressed to a dictionary entry of "abb(0,1,'d')(0,3,'a')". This includes an indication that the phrase is equal to the original phrase and which characters differ.Īs a file is parsed, the dictionary is dynamically updated to reflect the compressed data contents and size. Run-length-the number of characters that make up a phrase.ĭeviating characters-markers indicating a new phrase. Offset-the distance between the start of a phrase and the beginning of a file. In this method, LZ77 manages a dictionary that uses triples to represent: LZ77, released in 1977, is the base of many other lossless compression algorithms. There is a variety of algorithms you can choose from when you need to perform lossless compression. These algorithms enable you to reduce file size while ensuring that files can be fully restored to their original state if need be. 
Lossless compression algorithms are typically used for archival or other high fidelity purposes. In this article, you will discover six different types of lossless data compression algorithms, and four image and video compression algorithms based on deep learning. The type you use depends on how high fidelity you need your files to be. Lossy methods permanently erase data while lossless preserve all original data. When compressing data, you can use either lossy or lossless methods. This is accomplished by eliminating unnecessary data or by reformatting data for greater efficiency. Data compression is the process of reducing file sizes while retaining the same or a comparable approximation of data.
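To make the LZ77 "abbadabba" example from this article concrete, here is a minimal Python sketch. It assumes the convention used in that example: each triple is (offset from the start of the output, run length, next character), and literal strings are copied through unchanged. The function names `lz77_decode` and `lz77_encode` are hypothetical, and the greedy encoder is only an illustration, not how production compressors search for matches.

```python
def lz77_decode(tokens):
    """Expand literal strings and (offset, length, next_char) triples."""
    out = []
    for token in tokens:
        if isinstance(token, str):
            out.extend(token)  # literal characters are copied through
        else:
            offset, length, next_char = token
            # Copy `length` characters starting `offset` characters from the
            # beginning of the output so far, then append the deviating char.
            for i in range(length):
                out.append(out[offset + i])
            out.append(next_char)
    return "".join(out)


def lz77_encode(text):
    """Greedy encoder (illustrative assumption): emits triples only."""
    tokens = []
    pos = 0
    while pos < len(text):
        best_offset, best_length = 0, 0
        for start in range(pos):
            length = 0
            # Extend the match, keeping one character back for next_char
            # and never reading past the current position.
            while (pos + length < len(text) - 1
                   and start + length < pos
                   and text[start + length] == text[pos + length]):
                length += 1
            if length > best_length:
                best_offset, best_length = start, length
        tokens.append((best_offset, best_length, text[pos + best_length]))
        pos += best_length + 1
    return tokens


# The dictionary entry from the article: "abb(0,1,'d')(0,3,'a')"
print(lz77_decode(["abb", (0, 1, "d"), (0, 3, "a")]))  # abbadabba
```

Round-tripping any string through `lz77_encode` and then `lz77_decode` should return the original text, which is the property that makes the method lossless.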

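The frequency-based code assignment that Huffman coding performs can be sketched in a similar way. This is a simplified illustration using Python's standard `heapq` and `collections.Counter`; the helper names (`huffman_codes`, `huffman_encode`, `huffman_decode`) are hypothetical, and the output is a string of "0" and "1" characters rather than packed bits, which a real DEFLATE implementation would not do.

```python
import heapq
from collections import Counter


def huffman_codes(text):
    """Build a {symbol: bitstring} table from symbol frequencies."""
    freq = Counter(text)
    if not freq:
        return {}
    if len(freq) == 1:
        # A single distinct symbol still needs a one-bit code.
        return {next(iter(freq)): "0"}
    # Heap entries: (weight, unique tie breaker, {symbol: code so far}).
    heap = [(w, i, {sym: ""}) for i, (sym, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees, prefixing their codes.
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]


def huffman_encode(text, codes):
    return "".join(codes[ch] for ch in text)


def huffman_decode(bits, codes):
    reverse = {code: sym for sym, code in codes.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in reverse:  # codes are prefix-free, so this is unambiguous
            out.append(reverse[current])
            current = ""
    return "".join(out)


codes = huffman_codes("abbadabba")
bits = huffman_encode("abbadabba", codes)
assert huffman_decode(bits, codes) == "abbadabba"
```

Because rarer symbols are merged earlier, they end up deeper in the tree and receive codes at least as long as those of more frequent symbols.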